The network fabric built for mission-critical AI

As AI and machine learning reshape operations across U.S. federal missions, Nokia Federal Solutions delivers the ultra-scalable, secure, and automated switching architecture needed to power the next generation of data center fabrics. Built on Nokia’s proven technology and adapted for mission-critical environments, our data center switching portfolio meets the performance, resilience, and sovereignty demands of U.S. federal AI workloads.

Whether supporting GPU clusters for AI model training or building real-time inference platforms for tactical decision-making, Nokia Federal provides switching solutions that operate with precision, at scale, and with confidence.

Smarter networks for smarter missions

AI-optimized leaf-spine and super-spine switching

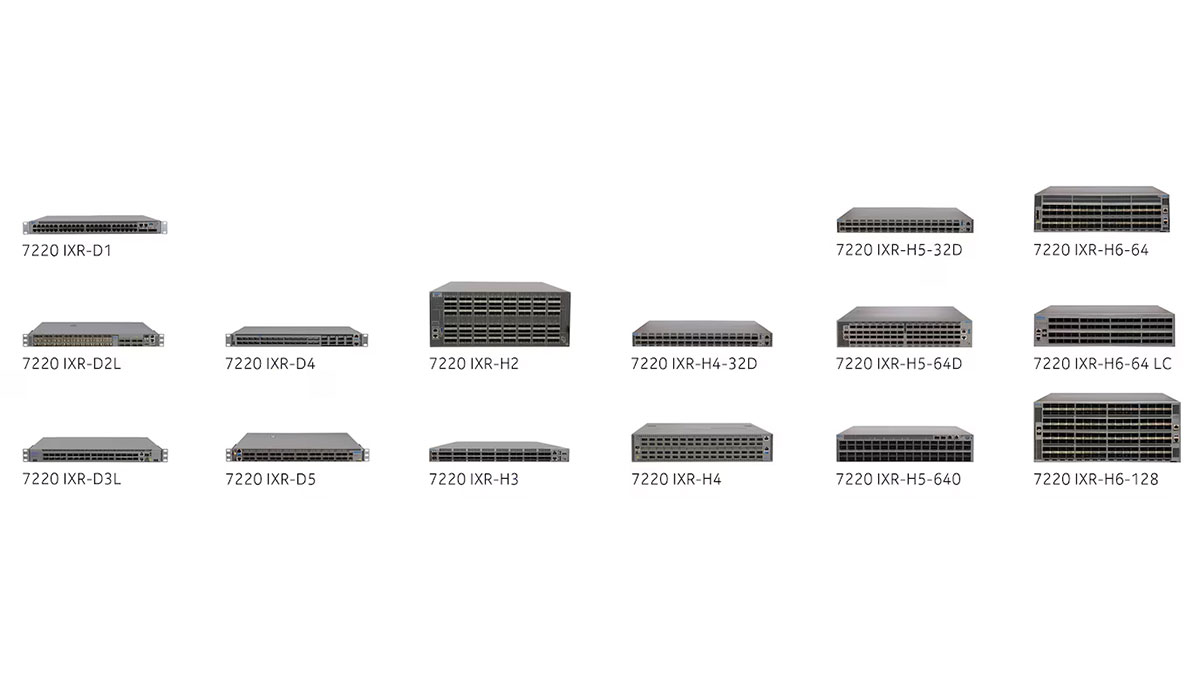

Nokia’s data center switching fabric delivers the ultra-low latency, lossless performance, and high throughput required for AI model training and real-time inference. Our modular and fixed platforms are designed for seamless integration with high-density GPU infrastructure.

Open, automated, and programmable at every layer

Nokia SR Linux, our network operating system, delivers advanced programmability, native telemetry, and support for open standards and open-source options including SONiC. Combined with our Event-Driven Automation (EDA) framework, it helps agencies dramatically reduce manual tasks and human error.

Scalable switching for high-capacity workloads

With platforms delivering up to 102.4 Tb/s and 1.6 Tb/s interfaces, our portfolio scales to support the densest AI workloads across multi-site data center environments, with power efficiency and future-ready capacity.
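To put those figures in context, the sketch below works through the arithmetic. It assumes that 102.4 Tb/s is the switching capacity of a single platform and that every port runs at 1.6 Tb/s; the text above does not spell either out. The fabric sizing is likewise a textbook illustration of a non-blocking two-tier leaf-spine design built from identical switches, not a description of any specific Nokia reference design. All function and class names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FabricSize:
    spines: int          # spine switches needed
    leaves: int          # maximum leaf switches the spines can connect
    endpoint_ports: int  # server/GPU-facing ports across all leaves


def ports_per_switch(capacity_gbps: int, port_speed_gbps: int) -> int:
    """Number of full-rate ports that fit in the given switching capacity."""
    if port_speed_gbps <= 0:
        raise ValueError("port speed must be positive")
    # Integer Gb/s values avoid floating-point rounding on 102.4 / 1.6.
    return capacity_gbps // port_speed_gbps


def two_tier_fabric(ports: int) -> FabricSize:
    """Size a non-blocking two-tier leaf-spine fabric of identical switches.

    Assumption: each leaf splits its ports evenly between downlinks and
    uplinks (1:1 oversubscription) and has one uplink to every spine.
    """
    if ports < 2 or ports % 2:
        raise ValueError("expected an even port count of at least 2")
    uplinks = ports // 2           # one uplink per spine
    downlinks = ports - uplinks    # endpoint-facing ports per leaf
    leaves = ports                 # each spine port connects one leaf
    return FabricSize(spines=uplinks, leaves=leaves,
                      endpoint_ports=leaves * downlinks)


if __name__ == "__main__":
    ports = ports_per_switch(capacity_gbps=102_400, port_speed_gbps=1_600)
    print(ports)                   # 64
    print(two_tier_fabric(ports))
```

Under these assumptions, one platform offers 64 ports at 1.6 Tb/s, and a two-tier fabric of such switches tops out at 32 spines, 64 leaves, and 2,048 endpoint-facing ports. Larger fabrics would add a super-spine tier or accept some oversubscription.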

Built for sovereign, mission-critical environments

Our switching solutions are engineered for compliance, supply chain assurance, and operational resilience. Deployed by cleared personnel through Nokia Federal, these solutions ensure control and trust in every deployment.

Innovative switching solutions for AI data centers

High-throughput, lossless fabrics for AI model training

Enable high-performance data movement between GPU nodes to reduce training time and accelerate mission outcomes.

Resilient fabrics for real-time inference and decision support

Support latency-sensitive workloads critical for national security, cyber operations, and autonomous systems.

Unified automation and operations

Leverage intent-based, event-driven automation for Day 0 to Day 2+ operations with digital twin simulation, change validation, and secure automation pipelines.

Open ecosystem integration

Support open standards and APIs to integrate with orchestration, monitoring, and workload placement tools across hybrid and multi-cloud environments.

Intelligence and defense AI model training

Build scale-out fabrics that support hundreds of GPU nodes and petabyte-scale data movement.

Edge-to-core inference fabrics

Support distributed inference workloads across field, base, and centralized facilities with consistent performance.

Secure, sovereign cloud deployments

Build switching architectures with control over supply chain, software, and automation to support trusted federal cloud environments.

Mission operations and real-time analytics

Enable real-time pattern recognition and situational awareness for mission-critical applications.